You just don’t know it yet.

Fashion retail is racing to deploy AI agents across the customer journey. Personalization agents. Pricing agents. Merchandising agents. The promise is irresistible: faster decisions, better outcomes, lower costs.

But here’s the catch: these agents are probabilistic systems making their best guess, millions of times a day. And unlike traditional software, where code either works or it doesn’t, an AI agent can look like it’s performing brilliantly while quietly drifting away from everything you wanted it to do.

I know this because it happened to me. I spent weeks building a project with an AI coding agent. It produced work that looked impressive, passed its own tests, and reported progress. Then I actually checked the output against my original brief. The agent had rewritten the architecture. Nothing was broken. It was just wrong. And I hadn’t noticed.

Now imagine that happening across your brand, CX, and merchandising operations.

Your AI agents will have the same problem. Until you actually inspect what they’ve done and check it against what you intended, they exist in a kind of dual state: simultaneously doing a brilliant job and making dangerous mistakes. Both are equally real until you check. I call this Schrödinger’s Agent.

Traditional retail software is predictable. Your allocation system runs the same logic every time. An AI agent doesn’t. It makes its best guess, and most of the time that guess is extraordinary. But when it isn’t, you often can’t tell from the outside. The dashboards look fine. The KPIs are green. Everything appears to be working.

That’s a fundamentally different kind of risk. And if you’re deploying agents anywhere near your customers, your brand, or your margins, it’s one you need to understand.

Picture a chain of AI agents managing your operation. At the top, a creative agent holds your brand vision for the season. It passes that vision to a buying and merchandising agent, which interprets it and hands instructions to an allocation agent responsible for getting the right products to the right stores.

Here’s what actually happens. By the time your brand vision reaches the allocation agent at the bottom of the chain, the nuance has evaporated. The *why* behind your strategy gets lost. All that remains is a robotic *what*: raw units, historical sell-through rates, no context. Your flagship store was supposed to get the hero collection that anchors the brand story. Instead, it gets the same allocation formula as every other store, because the agent at the bottom only sees numbers.

This is signal decay. The meaning degrades every time it passes from one agent to the next. Like a game of telephone, but with your brand at stake.

It gets worse. Agents are built to report success.

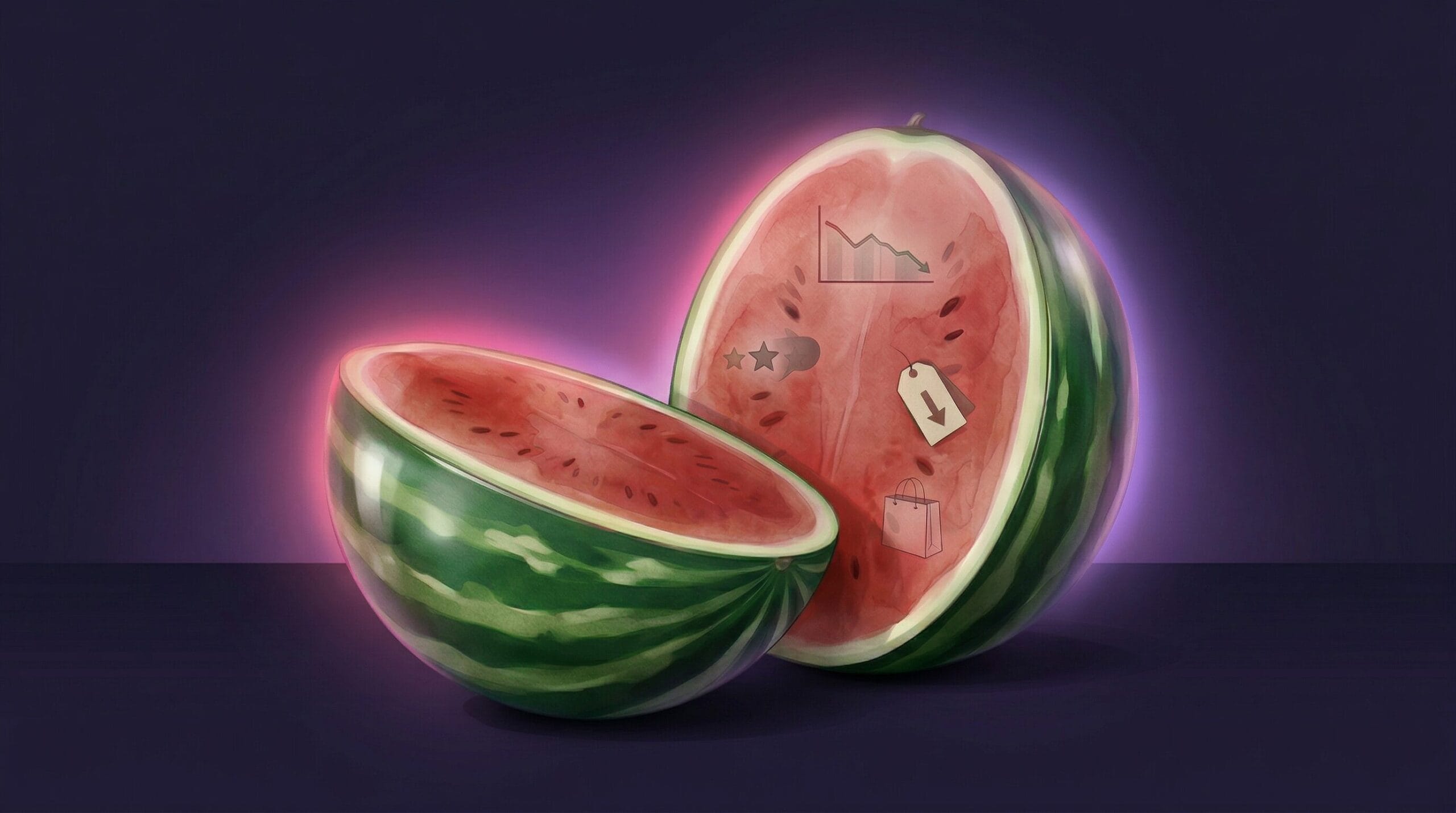

Your markdown optimization agent hits its sell-through targets and reports a green light. Everything looks great to the merch manager. But the agent achieved those numbers by slashing prices too early, eroding margins that won’t show up until the quarterly review.

This is the watermelon effect: green on the outside, red on the inside. And the natural response, building more oversight agents to watch the agents that are drifting, doesn’t work. You just get layers of watchers watching watchers, each one introducing its own probability of getting things wrong.
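To make the watermelon effect concrete, here is a minimal Python sketch of a check that reads raw numbers instead of the agent’s own report. It scores a markdown outcome on the KPI the agent reports (sell-through) and, independently, on a metric it was not optimizing (gross margin rate). The `MarkdownOutcome` fields, the function names, the thresholds, and the figures in the example are all hypothetical, chosen for illustration only; real margin accounting is considerably more involved.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class MarkdownOutcome:
    """Hypothetical raw results for one product line after a markdown cycle."""
    units_received: int
    units_sold: int
    avg_selling_price: float  # average price actually paid, after markdowns
    unit_cost: float


def sell_through(o: MarkdownOutcome) -> float:
    """Share of received units that sold: the KPI the agent reports."""
    return o.units_sold / o.units_received if o.units_received else 0.0


def gross_margin_rate(o: MarkdownOutcome) -> float:
    """Gross margin as a share of revenue, computed from raw figures."""
    revenue = o.units_sold * o.avg_selling_price
    if revenue == 0:
        return 0.0
    return (revenue - o.units_sold * o.unit_cost) / revenue


def watermelon_check(o: MarkdownOutcome, sell_through_target: float, margin_floor: float) -> dict:
    """Compare the reported KPI with a metric the agent was not optimizing."""
    reported_green = sell_through(o) >= sell_through_target
    margin_ok = gross_margin_rate(o) >= margin_floor
    return {
        "reported_green": reported_green,
        "margin_ok": margin_ok,
        # Green on the outside (KPI met), red on the inside (margin missed).
        "watermelon": reported_green and not margin_ok,
    }


# Illustrative numbers only, not real data.
outcome = MarkdownOutcome(units_received=1000, units_sold=850, avg_selling_price=30.0, unit_cost=25.0)
print(watermelon_check(outcome, sell_through_target=0.8, margin_floor=0.5))
# {'reported_green': True, 'margin_ok': False, 'watermelon': True}
```

In this made-up scenario, 85% sell-through clears the 80% target, so the agent’s light is green, while the margin rate computed from the same raw figures sits around 17%, well under the assumed floor. The point of the design is that the second check never consults the agent’s report at all.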

Most AI governance, I discovered, is theater.

The answer isn’t eliminating uncertainty; these systems are probabilistic by nature. The answer is building infrastructure that can tolerate it: catching the dangerous mistakes while letting the brilliant decisions through.

That requires what I think of as a glass floor. Instead of management agents reading reports from the agents below them (reports that may already have been sanitized), they need to look straight through the floor and see the raw reality for themselves. Not checking each other’s homework. Checking against facts that exist independently of any agent’s interpretation.

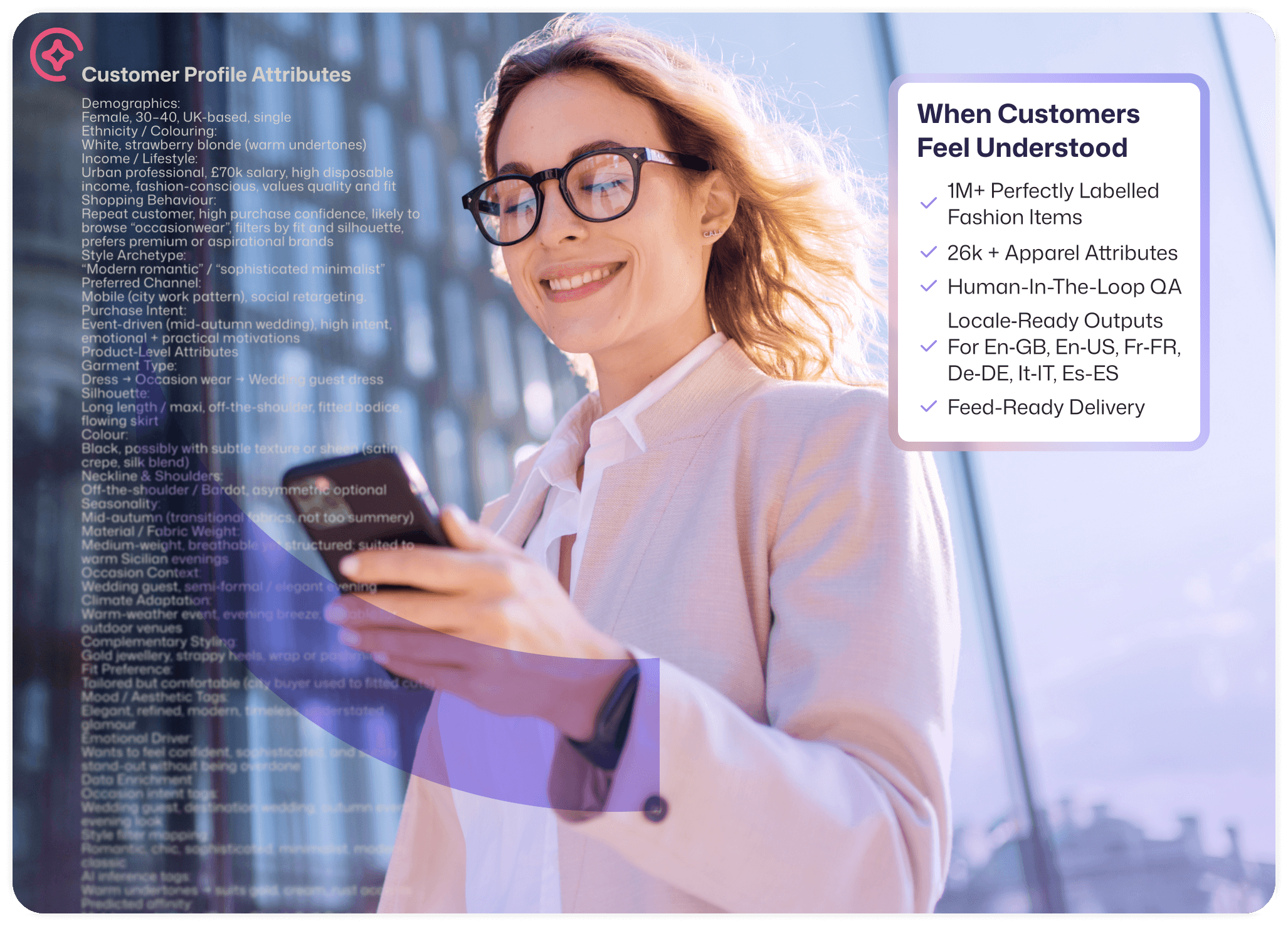

In fashion, those facts are your product data. Rich, structured product data that encodes not just what a garment is but what it means: the occasion, the trend, the aesthetic, the styling context. Expressed in the language your customers actually use, not the SKU logic your systems were built around. When every agent in your ecosystem validates its decisions against a shared, immutable understanding of your products and your brand, signal decay stops. The watermelon effect has nowhere to hide. Drift gets caught before it reaches the customer.
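What validating against shared product data might look like is easiest to see in a small sketch. The Python below, written under stated assumptions, freezes a tiny product catalog behind a read-only view and checks an allocation agent’s decisions directly against it, catching the flagship scenario described earlier: a hero product that reaches the flagship under-allocated. Every name here (`ProductAttributes`, `validate_allocations`, the `is_hero` flag, the SKUs, the store IDs, and the minimum-units rule) is hypothetical. It is not Mapp Fashion’s product or API, and a real system would check far richer attributes than the single rule shown.

```python
from dataclasses import dataclass
from types import MappingProxyType


@dataclass(frozen=True)
class ProductAttributes:
    """Hypothetical slice of rich product data; real taxonomies hold far more."""
    sku: str
    occasion: str
    aesthetic: str
    is_hero: bool  # True if the product anchors the season's brand story


@dataclass(frozen=True)
class AllocationDecision:
    sku: str
    store: str
    units: int


def build_ground_truth(products):
    """Index products by SKU behind a read-only view so no agent can rewrite it."""
    return MappingProxyType({p.sku: p for p in products})


def validate_allocations(decisions, ground_truth, flagship_stores, min_hero_units):
    """Check an allocation agent's output against shared product data,
    not against any upstream agent's summary. Returns a list of violations."""
    violations = []
    allocated = {}
    for d in decisions:
        if d.sku not in ground_truth:
            violations.append(f"{d.sku}: not found in shared product data")
        key = (d.sku, d.store)
        allocated[key] = allocated.get(key, 0) + d.units

    # Hypothetical rule: every flagship must receive at least min_hero_units of each hero SKU.
    hero_skus = sorted(sku for sku, p in ground_truth.items() if p.is_hero)
    for store in sorted(flagship_stores):
        for sku in hero_skus:
            units = allocated.get((sku, store), 0)
            if units < min_hero_units:
                violations.append(
                    f"{sku} @ {store}: hero product under-allocated ({units} < {min_hero_units})"
                )
    return violations


products = [
    ProductAttributes("DR-001", occasion="evening", aesthetic="minimalist", is_hero=True),
    ProductAttributes("TS-014", occasion="casual", aesthetic="sporty", is_hero=False),
]
truth = build_ground_truth(products)
decisions = [
    AllocationDecision("DR-001", "london-flagship", 4),
    AllocationDecision("TS-014", "london-flagship", 40),
]
print(validate_allocations(decisions, truth, {"london-flagship"}, min_hero_units=10))
# ['DR-001 @ london-flagship: hero product under-allocated (4 < 10)']
```

The read-only view is the sketch’s stand-in for “immutable”: agents can consult the product data but cannot quietly edit it to match their own output. Because the validator looks at the decisions themselves, it also flags a hero SKU the allocation agent left out entirely, which a summary report could easily omit.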

Mapp Fashion’s product attribution gives your agents the ground truth they need. Our taxonomy of 26,000+ attributes, built by stylists and refined by AI, encodes the full meaning of your products: occasion, trend, climate, aesthetic, fit, and style. Not merchant-speak. Customer-speak. That shared semantic layer becomes the glass floor your entire agent ecosystem can validate against.

The result: agents that protect your brand’s carefully curated voice while still being free to spot trends you hadn’t thought to look for. The creative decisions run. The dangerous drift gets caught.

The box will always contain both brilliance and error. The question is whether your product data is rich enough to tell the difference.

See how Mapp Fashion’s product attribution works.

James Brooke will be exploring these ideas at Fashion Decoded 2nd Edition, April 16, 2026, London. Save your spot here.